Spark 2.4.6 Cluster Deployment

Source: original

Date: 2020-07-26

Author: 脚本小站

Category: Linux

Install the Hadoop cluster before installing Spark.

Spark download page:

https://downloads.apache.org/spark/

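Download the package (once a release is superseded, Apache moves it to https://archive.apache.org/dist/spark/):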

wget https://downloads.apache.org/spark/spark-2.4.6/spark-2.4.6-bin-hadoop2.7.tgz
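Before unpacking, it may be worth checking the archive's integrity. The sketch below compares a file's SHA-512 digest with an expected value. `verify_sha512` is a hypothetical helper name, and the expected digest is an assumption: it has to be taken from the `.sha512` file published next to the archive and is not reproduced here.

```shell
#!/usr/bin/env bash
# verify_sha512 FILE EXPECTED_HEX
# Hypothetical helper: succeeds only if FILE's SHA-512 digest matches EXPECTED_HEX.
# Case and whitespace in EXPECTED_HEX are ignored, since published digests
# are sometimes written as groups of uppercase hex.
verify_sha512() {
  local file=$1 expected actual
  expected=$(printf '%s' "$2" | tr -d '[:space:]' | tr 'A-F' 'a-f')
  actual=$(sha512sum "$file" | awk '{print $1}')
  [ "$actual" = "$expected" ]
}

# Usage (the digest is a placeholder to be pasted from the .sha512 file):
#   verify_sha512 spark-2.4.6-bin-hadoop2.7.tgz "<digest>" && echo OK
```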

Copy the package to each node:

scp spark-2.4.6-bin-hadoop2.7.tgz root@hadoop-node1:/root
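With more than one worker, the copy can be wrapped in a loop. This is a minimal sketch: `copy_to_nodes` and the `DRY_RUN` switch are hypothetical names introduced for illustration, and the node list is assumed to match the host names used in this article.

```shell
#!/usr/bin/env bash
# copy_to_nodes FILE NODE...
# Hypothetical helper: copies FILE to /root on every listed node.
# With DRY_RUN=1 it only prints the scp commands instead of running them.
copy_to_nodes() {
  local file=$1 node
  shift
  for node in "$@"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp $file root@$node:/root"
    else
      scp "$file" "root@$node:/root" || return 1
    fi
  done
}

# Usage:
#   copy_to_nodes spark-2.4.6-bin-hadoop2.7.tgz hadoop-node1 hadoop-node2
```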

Extract and install:

tar -xf spark-2.4.6-bin-hadoop2.7.tgz -C /usr/local/
cd /usr/local/
ln -sv spark-2.4.6-bin-hadoop2.7/ spark

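Configure the environment variables. The heredoc delimiter is quoted so that $PATH and $SPARK_HOME are written to the file literally instead of being expanded while the file is created:

cat > /etc/profile.d/spark.sh <<'EOF'
export SPARK_HOME=/usr/local/spark
export PATH=$PATH:$SPARK_HOME/bin
EOF
. /etc/profile.d/spark.sh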
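Whether the heredoc delimiter is quoted decides what ends up in the file. With a plain `EOF` the shell expands `$PATH` and `$SPARK_HOME` while writing. With `'EOF'` the text is stored literally and expanded later, when the profile script is sourced. The sketch below writes both variants into a temporary directory so the difference can be inspected safely; the file names are illustrative.

```shell
#!/usr/bin/env bash
# Write the same line through an unquoted and a quoted heredoc into a
# throwaway directory, so nothing under /etc is touched.
demo_dir=$(mktemp -d)
unset SPARK_HOME   # simulate a shell where Spark is not configured yet

cat > "$demo_dir/unquoted.sh" <<EOF
export PATH=$PATH:$SPARK_HOME/bin
EOF

cat > "$demo_dir/quoted.sh" <<'EOF'
export PATH=$PATH:$SPARK_HOME/bin
EOF

# unquoted.sh holds the already-expanded value (it ends in ":/bin");
# quoted.sh holds the literal text, which is expanded when sourced.
cat "$demo_dir/unquoted.sh" "$demo_dir/quoted.sh"
```

In the unquoted variant the Spark `bin` directory never reaches `PATH`, because `$SPARK_HOME` was still empty when the file was written.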

Configure the worker nodes. Here the master node also acts as a worker.

cat > /usr/local/spark/conf/slaves <<EOF
hadoop-master
hadoop-node1
hadoop-node2
EOF
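To double-check which hosts the file actually names, the list can be printed with comments and blank lines removed. This is a sketch under the assumption that `#` starts a comment in the slaves file, as it does in the shipped template; `list_workers` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# list_workers FILE
# Hypothetical helper: prints the host names in a slaves file, dropping
# "#" comments, surrounding whitespace and blank lines.
list_workers() {
  sed -e 's/#.*$//' -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' -e '/^$/d' "$1"
}

# Usage:
#   list_workers /usr/local/spark/conf/slaves
```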

Copy the configuration template:

cp /usr/local/spark/conf/spark-env.sh.template /usr/local/spark/conf/spark-env.sh

Set the environment variables. Spark loads the variables in this file first.

cat >> /usr/local/spark/conf/spark-env.sh <<EOF
export SPARK_MASTER_HOST=hadoop-master
EOF
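Because `>>` appends, running this step twice leaves the export in the file twice. A minimal sketch of an idempotent append follows; `ensure_line` is a hypothetical helper, not something Spark provides.

```shell
#!/usr/bin/env bash
# ensure_line FILE LINE
# Hypothetical helper: appends LINE to FILE only if that exact line is absent,
# so repeating the setup does not duplicate the export.
ensure_line() {
  local file=$1 line=$2
  touch "$file"
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage:
#   ensure_line /usr/local/spark/conf/spark-env.sh 'export SPARK_MASTER_HOST=hadoop-master'
```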

Change the owner and group:

cd /usr/local/
chown -R hadoop:hadoop spark/ spark

Copy the configuration to the other nodes (run from /usr/local/spark/conf):

scp ./* root@hadoop-node1:/usr/local/spark/conf/
scp ./* root@hadoop-node2:/usr/local/spark/conf/

Start the master node as the hadoop user. The start scripts are in /usr/local/spark/sbin.

su hadoop
~]$ ./start-master.sh
starting org.apache.spark.deploy.master.Master, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.master.Master-1-hadoop-master.out

Check the processes running on the master node:

~]$ jps
5078 Master
5163 Worker
...
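The same check can be scripted. The sketch below reads `jps` output on standard input and looks for a given main class; `has_daemon` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# has_daemon NAME
# Hypothetical helper: reads `jps` output on stdin and succeeds if a JVM
# whose main class is exactly NAME is listed.
has_daemon() {
  awk -v name="$1" '$2 == name { found = 1 } END { exit !found }'
}

# Usage on the master node:
#   jps | has_daemon Master && jps | has_daemon Worker && echo "master and worker are up"
```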

Start the worker nodes:

]$ ./start-slaves.sh
hadoop-node1: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-hadoop-node1.out
hadoop-node2: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-hadoop-node2.out

On node1:

~]$ jps
2898 Worker
...

Start the master and the worker nodes together:

]$ ./start-all.sh
starting org.apache.spark.deploy.master.Master, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.master.Master-1-hadoop-master.out
hadoop-master: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-hadoop-master.out
hadoop-node2: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-hadoop-node2.out
hadoop-node1: starting org.apache.spark.deploy.worker.Worker, logging to /usr/local/spark/logs/spark-hadoop-org.apache.spark.deploy.worker.Worker-1-hadoop-node1.out

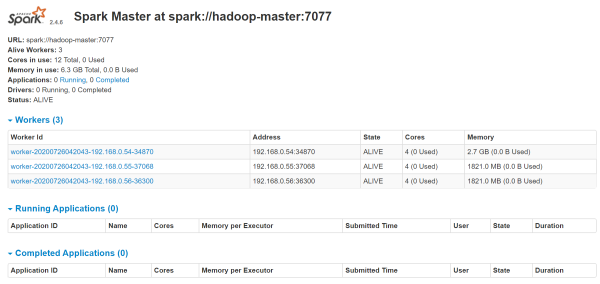

Web UI:

http://192.168.0.54:8080/
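Reachability of the UI can also be probed from a shell. A minimal sketch, assuming `curl` is installed and the UI runs on port 8080 as in the address above; `master_ui_url` and `ui_is_up` are hypothetical helper names.

```shell
#!/usr/bin/env bash
# master_ui_url HOST [PORT]
# Hypothetical helper: builds the master web UI URL (port defaults to 8080).
master_ui_url() {
  local host=$1 port=${2:-8080}
  printf 'http://%s:%s/\n' "$host" "$port"
}

# ui_is_up HOST [PORT]
# Succeeds if the UI answers with HTTP 200 within 5 seconds.
ui_is_up() {
  local code
  code=$(curl -s -o /dev/null -m 5 -w '%{http_code}' "$(master_ui_url "$@")") || return 1
  [ "$code" = "200" ]
}

# Usage from any machine that can reach the master:
#   ui_is_up 192.168.0.54 && echo "master UI reachable"
```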